As artificial intelligence (AI) evolves quickly, the race to build more capable and autonomous systems is intensifying. Microsoft, a major player in the field, has recently released AutoGen, a framework that orchestrates interaction among multiple AI agents in order to streamline task execution.

AutoGen, an open-source Python library, is Microsoft’s entry into the field of large language model (LLM) application frameworks. It is designed to simplify the orchestration, optimization, and automation of workflows built around LLMs such as GPT-4. The focus is on "agents": programming modules backed by LLMs that communicate with each other through natural language messages to accomplish a range of tasks.

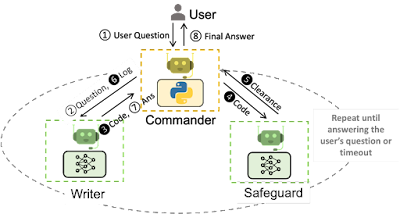

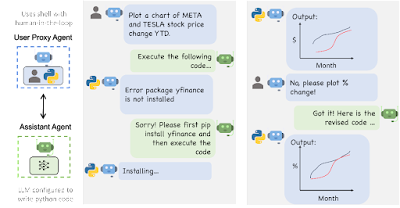

What makes AutoGen appealing is its modular architecture. Developers can build an ecosystem of agents, each specializing in a different task yet able to cooperate with the others. Each agent can be thought of as an individual ChatGPT session with its own instruction set. For instance, one agent might act as a programming assistant that generates Python code from user requests, while another acts as a code reviewer that examines the snippets and troubleshoots them. The response from the first agent is passed as input to the second, forming a coherent workflow.
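The coder-to-reviewer handoff described above can be sketched in plain Python. This is only an illustration of the message-passing pattern, not AutoGen's API: the `Agent` class, `stub_coder`, `stub_reviewer`, and `run_pipeline` are hypothetical names, and the stub functions stand in for real LLM calls.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Agent:
    """A named agent with its own instructions and a reply function.

    reply_fn is a stand-in for an LLM call (assumption for this sketch).
    """
    name: str
    instructions: str
    reply_fn: Callable[[str], str]
    history: List[str] = field(default_factory=list)

    def reply(self, message: str) -> str:
        # Record what the agent received, then produce its answer.
        self.history.append(message)
        return self.reply_fn(message)


def stub_coder(request: str) -> str:
    # A real agent would send its instructions plus the request to an LLM.
    return "def add(a, b):\n    return a + b"


def stub_reviewer(code: str) -> str:
    # Toy review rule: approve only if the snippet defines a function.
    return "APPROVED" if code.lstrip().startswith("def ") else "CHANGES REQUESTED"


coder = Agent("coder", "Write Python code for the user's request.", stub_coder)
reviewer = Agent("reviewer", "Review the code you receive.", stub_reviewer)


def run_pipeline(request: str) -> Tuple[str, str]:
    """Feed the coder's output to the reviewer as its input message."""
    code = coder.reply(request)
    verdict = reviewer.reply(code)
    return code, verdict


code, verdict = run_pipeline("Write a function that adds two numbers.")
print(verdict)
```

In a real deployment each `reply_fn` would wrap a model call with the agent's instruction set, but the flow of one agent's output becoming the next agent's input stays the same.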

The framework also allows agents to be customized and augmented through prompt engineering techniques and external tools. These augmentations let agents fetch information or execute code, widening the range of tasks they can handle.

A striking feature of AutoGen is its “human proxy agents”, which let users step into the conversation between AI agents. This turns the human user into a team leader overseeing a group of AI agents, providing more oversight and control, especially in scenarios that involve sensitive decisions.
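The oversight role can be illustrated with a small approval gate: every proposal from an agent is shown to a person, and only approved proposals go forward. This is a minimal sketch under stated assumptions, not AutoGen's implementation; `human_proxy`, `run_with_oversight`, and the `ask` parameter are hypothetical, and scripted answers replace keyboard input so the example runs unattended.

```python
from typing import Callable, List


def human_proxy(proposal: str, ask: Callable[[str], str] = input) -> str:
    """Show an agent's proposal to a person and return their decision."""
    answer = ask(f"Agent proposes: {proposal!r}. Approve? [y/n] ").strip().lower()
    return "approve" if answer in ("y", "yes") else "reject"


def run_with_oversight(
    proposals: List[str], ask: Callable[[str], str] = input
) -> List[str]:
    """Keep only the proposals the human approved."""
    return [p for p in proposals if human_proxy(p, ask) == "approve"]


# Scripted answers stand in for a person typing at the prompt.
scripted = iter(["y", "n"])
approved = run_with_oversight(
    ["refactor utils.py", "delete the production database"],
    ask=lambda prompt: next(scripted),
)
print(approved)
```

Leaving `ask` at its default of `input` would turn this into an interactive prompt, which is closer to how a person would actually sit in the loop.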

Multi-agent collaboration under AutoGen can lead to substantial efficiency gains. According to Microsoft, AutoGen can speed up coding by up to four times, which suggests a promising way to shorten development timelines.

AutoGen also supports more complex scenarios through hierarchical arrangements of LLM agents. For instance, a group chat manager agent can mediate a discussion between multiple human users and LLM agents, keeping the conversation orderly according to predefined rules.
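A mediating manager can be sketched as a loop that decides who speaks next and when the conversation ends. The example below assumes two simple predefined rules, round-robin turn order and a `DONE` stop message, and uses a hypothetical `ToyChatManager` class; AutoGen's own manager may select speakers differently.

```python
from typing import Callable, Dict, List, Tuple

Transcript = List[Tuple[str, str]]
# Each participant sees the transcript so far and returns its next message.
Speaker = Callable[[Transcript], str]


class ToyChatManager:
    """Mediates a chat with round-robin turns and a DONE stop rule."""

    def __init__(self, participants: Dict[str, Speaker], max_rounds: int):
        self.participants = participants
        self.max_rounds = max_rounds
        self.transcript: Transcript = []

    def run(self, opening: str) -> Transcript:
        self.transcript.append(("user", opening))
        names = list(self.participants)
        for turn in range(self.max_rounds):
            # Rule 1: participants speak in a fixed rotating order.
            name = names[turn % len(names)]
            message = self.participants[name](self.transcript)
            self.transcript.append((name, message))
            # Rule 2: any participant can end the chat by saying DONE.
            if message == "DONE":
                break
        return self.transcript


manager = ToyChatManager(
    {
        "planner": lambda t: "plan: split the task in two",
        "coder": lambda t: "code: implemented both parts",
        "reviewer": lambda t: "DONE",
    },
    max_rounds=6,
)
transcript = manager.run("Build a CSV parser.")
for speaker, message in transcript:
    print(f"{speaker}: {message}")
```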

As the field of LLM application frameworks grows, AutoGen faces many competitors. What sets it apart is its emphasis on a collaborative environment in which multiple AI agents, with some human intervention, work together to complete tasks.

Despite challenges such as hallucinations and unpredictable behavior from LLM agents, the outlook is promising. LLM agents are poised to play an important role in the future of application development and operating systems. With AutoGen, Microsoft is both competing in this fast-moving field and laying a foundation for closer collaboration between AI and humans.
